Intel’s Microprocessor Evolution: How Far Have We Come?

Microprocessors have come a long way since their inception in the early 1970s, and Intel has been at the forefront of that evolution. With each new generation, they have become more powerful, efficient, and versatile. In this article, we trace Intel’s microprocessor history from the 4004 to the 11th Gen Intel® Core™ processors, the latest generation at the time of writing. Along the way, we explore the key technologies and innovations that fueled this evolution and discuss where microprocessors may go next.

The Early Days: The 4004 and 8008

- The Origins of the Microprocessor

In the early 1970s, the personal computer did not yet exist: computers were large, extremely expensive, and complex. Microprocessors opened the way to smaller, simpler, and more affordable machines. At Intel, Ted Hoff proposed the single-chip CPU architecture in 1969 and refined it with Stanley Mazor; Federico Faggin joined in 1970 and led the chip design. Intel was a leader in the emerging microprocessor industry and was quick to capitalize on the potential of this new technology.

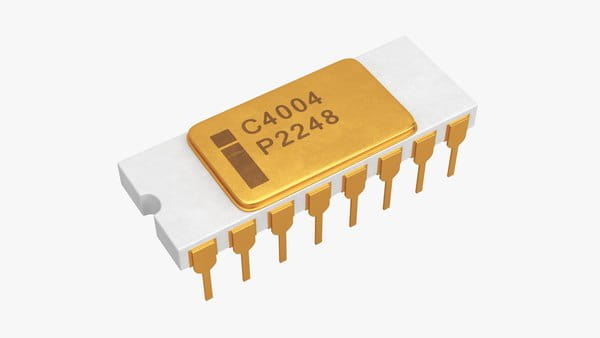

- Introducing the 4004

Released in 1971, the 4004 was Intel’s first microprocessor. It was a 4-bit CPU with a maximum clock speed of 740kHz, capable of executing roughly 60,000 instructions per second. The 4004 was designed for use in calculators and other small devices and was hailed as a significant breakthrough at the time.

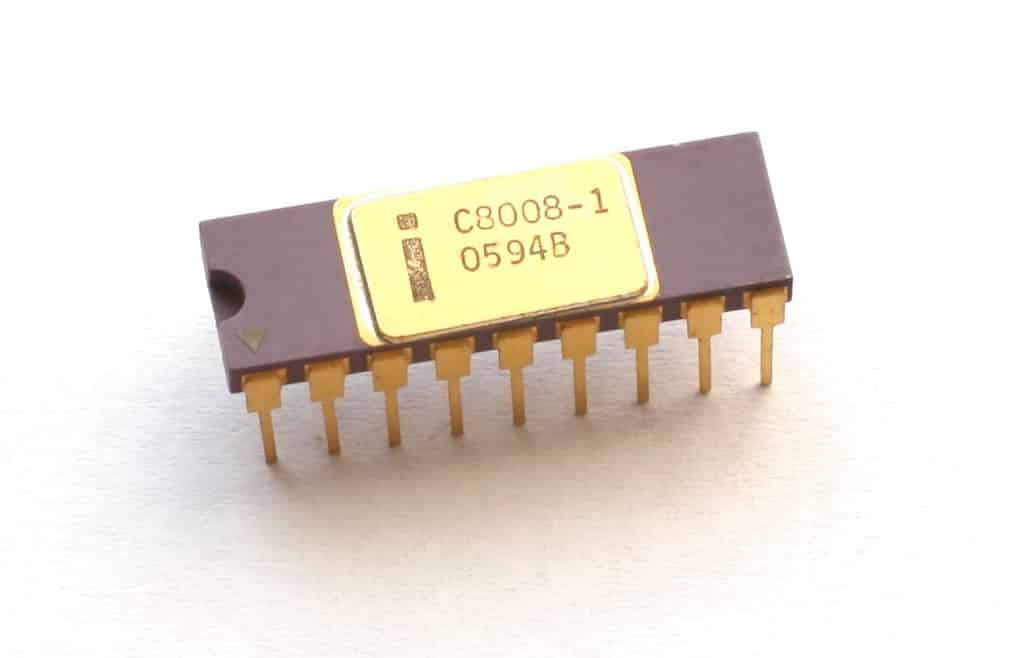

- The 8008: Intel’s First 8-Bit Processor

The 8008, released in 1972, was Intel’s first 8-bit microprocessor. It widened the data path from 4 to 8 bits, although its raw speed was modest: the original part ran at 500kHz and the later 8008-1 at 800kHz, executing on the order of tens of thousands of instructions per second. The 8008 was used in a variety of applications, including computer terminal controllers and industrial control systems.

The Rise of the x86 Architecture: The 8086 and 80286

- The x86 Architecture

x86 is a family of instruction set architectures developed by Intel, and it has become the dominant architecture for personal computers. It has been revised and extended many times over the years, but it has kept backward compatibility with its earlier instruction sets.

- The 8086: Intel’s First 16-Bit Processor

The 8086, released in 1978, was Intel’s first 16-bit microprocessor. It was a major improvement over Intel’s earlier 8-bit processors, initially running at 5MHz and executing several hundred thousand instructions per second. A lower-cost variant with an 8-bit external data bus, the 8088, was used in the first IBM PC and established Intel’s dominance of the personal computer market.

- The 80286: A Major Step Forward in Performance

The 80286, released in 1982, was a significant improvement over the 8086. It was the first x86 processor to support protected mode, which added memory protection so that multiple programs could run at the same time without interfering with each other. Early versions of the 80286 ran at 6 or 8MHz and could execute roughly 1.2 million instructions per second.

The Pentium Era: Pentium, Pentium Pro, and Pentium II

- Introducing the Pentium Processor

The Pentium processor, released in 1993, was a major breakthrough for the x86 line. It was the first x86 processor with a superscalar design, meaning it could issue two instructions per clock cycle through its two integer pipelines. The Pentium launched at 60 and 66MHz, where it could execute roughly 100 million instructions per second, and later models reached 200MHz and beyond.

- The Pentium Pro: Intel’s First P6 Processor

The Pentium Pro, released in 1995, introduced Intel’s P6 microarchitecture, which added out-of-order execution. It was a 32-bit processor (64-bit x86-64 support did not reach Intel processors until 2004), but its 36-bit physical address bus let it address up to 64GB of memory. Designed for high-end servers and workstations, the Pentium Pro was a significant improvement over its predecessor in processing power.

- The Pentium II: A New Level of Integration

The Pentium II, released in 1997, built on the P6 design of the Pentium Pro. It was the first processor to use Intel’s Slot 1 interface, which packaged the processor and its L2 cache together in a single cartridge module. The Pentium II ran at speeds from 233MHz up to 450MHz and could execute on the order of hundreds of millions of instructions per second.
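The 64GB figure quoted for the Pentium Pro follows directly from the width of its physical address bus: each extra address bit doubles the reachable memory. The short sketch below works through that arithmetic. The 20-bit and 24-bit widths for the 8086 and 80286 are not stated in this article and are included only for comparison; the function names are illustrative.

```python
def addressable_bytes(address_bits: int) -> int:
    """Number of distinct byte addresses reachable with the given address width."""
    return 2 ** address_bits


def human_readable(num_bytes: int) -> str:
    """Format a byte count using binary units (KiB, MiB, GiB, TiB)."""
    units = ["B", "KiB", "MiB", "GiB", "TiB"]
    value = num_bytes
    for unit in units:
        # Stop at the first unit where the value fits, or at the largest unit.
        if value < 1024 or unit == units[-1]:
            return f"{value} {unit}"
        value //= 1024


# Physical address widths in bits (comparison values, see the note above).
ADDRESS_BITS = {"8086": 20, "80286": 24, "Pentium Pro": 36}

for name, bits in ADDRESS_BITS.items():
    size = human_readable(addressable_bytes(bits))
    print(f"{name}: {bits}-bit addresses -> {size}")
```

Note that the result for 36 bits is 64 GiB in binary units, which is what the "64GB" in the text refers to.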

The Core Revolution: Core, Core 2, and Beyond

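- The Core Architecture

The Core microarchitecture, introduced in 2006, was a major redesign of Intel’s microprocessor line. It replaced the power-hungry NetBurst design of the Pentium 4 and was built around multiple cores from the start. It was not Intel’s first multi-core design (the Pentium D arrived in 2005), and it did not include Hyper-Threading, which had debuted in 2002 and returned in later generations. The Core microarchitecture delivered higher performance at lower power consumption.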

- The Core Duo: A Breakthrough in Energy Efficiency

The Core Duo, released in early 2006, was a breakthrough in microprocessor energy efficiency. A dual-core mobile processor derived from the Pentium M, it was among the first processors built on Intel’s 65nm manufacturing process, which greatly reduced power consumption. The Core Duo ran at speeds of just over 2GHz, and its two cores together could execute billions of instructions per second.

- The Core 2: A Major Boost in Performance

The Core 2, released in mid-2006, was a significant improvement over the Core Duo and the first family based on the Core microarchitecture. It launched on Intel’s 65nm process and moved to 45nm with later models, which further improved energy efficiency while boosting performance. The Core 2 ran at speeds of 3GHz and above in its fastest models, with dual-core and quad-core versions executing tens of billions of instructions per second at peak.

- The i3/i5/i7/i9 Series: The Next Generation of Processors

The Core i series introduced a new naming convention for Intel’s microprocessors. It began with the Core i7 in 2008, followed by the i5 and i3 over the next two years and the i9 in 2017. These processors target different levels of performance and include features like Turbo Boost and Hyper-Threading. The i9 series includes models with up to 18 cores, pushing peak throughput far beyond that of earlier generations.
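Instructions-per-second figures like those quoted throughout this article can be sanity-checked with simple arithmetic: peak throughput is roughly the number of cores times the clock rate times the instructions completed per clock cycle (IPC). The sketch below is a minimal illustration, not a benchmark. The 4004 case assumes an 8-clock instruction cycle, which gives an upper bound; the dual-core case uses a hypothetical clock and an assumed IPC chosen only for illustration.

```python
def peak_instructions_per_second(cores: int, clock_hz: float, ipc: float) -> float:
    """Upper-bound throughput: cores x clock rate x instructions per cycle."""
    return cores * clock_hz * ipc


# Intel 4004: one core at 740 kHz, assuming 8 clock cycles per instruction.
# Longer instructions take more cycles, so real throughput is lower.
i4004_peak = peak_instructions_per_second(cores=1, clock_hz=740_000, ipc=1 / 8)
print(f"4004 upper bound: {i4004_peak:,.0f} instructions/s")

# Hypothetical dual-core processor at 2 GHz with an ASSUMED IPC of 2.
# Real IPC depends heavily on the workload and the microarchitecture.
dual_core_peak = peak_instructions_per_second(cores=2, clock_hz=2e9, ipc=2.0)
print(f"Hypothetical dual-core peak: {dual_core_peak:,.0f} instructions/s")
```

Because IPC varies so much with the workload, figures computed this way are best read as ceilings rather than as measured performance.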

The Future of Microprocessors: What Lies Ahead?

- Moore’s Law and the Future of Scaling

Moore’s Law, the observation that the number of transistors on an integrated circuit doubles roughly every two years, has driven the microprocessor industry for decades. While there are concerns that this scaling is approaching physical limits, the industry is still moving forward, and it is also exploring alternative technologies such as quantum computing and photonics.

- The Emergence of New Technologies

New technologies like 3D packaging, chiplets, and nanosheet transistors are being developed to increase microprocessor performance and efficiency. 3D packaging and chiplets allow multiple dies to be combined or stacked in a single package, which shortens the distance between components and improves energy efficiency. Nanosheets are a new transistor structure that allows scaling to continue at smaller process nodes.

- The Rise of AI and Machine Learning

The rise of AI and machine learning is driving the development of processors designed specifically for these workloads. These processors are optimized for neural networks and accelerate the matrix and vector arithmetic that such models depend on.
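To make the doubling rule behind Moore’s Law concrete, the sketch below projects transistor counts forward from the 4004. It assumes a starting point of about 2,300 transistors in 1971 (the commonly cited figure for the 4004, not stated in this article) and an idealized doubling every two years. Real chips only loosely follow this curve, so the output is an illustration of exponential growth rather than a record of actual products.

```python
def projected_transistors(
    base_count: float, base_year: int, year: int, doubling_years: float = 2.0
) -> float:
    """Idealized Moore's Law projection: one doubling per `doubling_years`."""
    doublings = (year - base_year) / doubling_years
    return base_count * 2 ** doublings


# Assumed baseline: roughly 2,300 transistors on the Intel 4004 in 1971.
BASE_COUNT = 2300
BASE_YEAR = 1971

for year in (1971, 1981, 1991, 2001, 2011, 2021):
    count = projected_transistors(BASE_COUNT, BASE_YEAR, year)
    print(f"{year}: about {count:,.0f} transistors")
```

Five doublings per decade means a factor of 32 every ten years, which is why the projection climbs from thousands to tens of billions over five decades.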

Frequently Asked Questions

- What is a microprocessor?

A microprocessor is a small computer chip that contains the CPU (Central Processing Unit) of a computer.

- How do microprocessors work?

Microprocessors perform arithmetic and logic operations on data stored in memory. The processor retrieves instructions from memory, decodes and executes them, and stores the results back in memory.

- What is the difference between a CPU and a microprocessor?

A CPU is the broader term: it refers to the part of a computer that executes instructions, however it is built. A microprocessor is a CPU implemented on a single integrated circuit.

- What is Moore’s Law?

Moore’s Law is the observation that the number of transistors on an integrated circuit doubles roughly every two years, which has led to exponential growth in processing power.

- How long do microprocessors last?

Microprocessors can last for several years, depending on usage and wear and tear. However, they may become outdated as new technologies emerge.

- What is the latest generation of Intel processors?

At the time of writing, the latest generation of Intel processors is the 11th Gen Intel® Core™ family. These processors are designed for high performance and energy efficiency and include features like Thunderbolt™ 4 and Intel® Iris® Xe Graphics.
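As a rough illustration of the fetch, decode, and execute cycle described in the FAQ above, here is a toy accumulator machine. The four opcodes (`LOAD`, `ADD`, `STORE`, `HALT`) and the `run` function are invented for this sketch and do not correspond to any real Intel instruction set.

```python
def run(program, memory):
    """Run a toy accumulator machine until HALT and return the final memory."""
    acc = 0  # accumulator register: holds the value being worked on
    pc = 0   # program counter: index of the next instruction
    while True:
        opcode, operand = program[pc]  # fetch the next instruction
        pc += 1
        # Decode the opcode and execute the matching operation.
        if opcode == "LOAD":
            acc = memory[operand]      # read a value from memory
        elif opcode == "ADD":
            acc += memory[operand]     # arithmetic on data from memory
        elif opcode == "STORE":
            memory[operand] = acc      # write the result back to memory
        elif opcode == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode: {opcode}")


# Add the values at addresses 0 and 1, and store the sum at address 2.
program = [("LOAD", 0), ("ADD", 1), ("STORE", 2), ("HALT", None)]
memory = [2, 3, 0]
print(run(program, memory))  # prints [2, 3, 5]
```

A real microprocessor does the same three steps in hardware, with the instructions themselves also stored in memory as binary-encoded values.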

Conclusion

Intel’s microprocessor evolution has been extraordinary, with each new generation building on the success of the last. From the 4004 to the 11th Gen Intel® Core™ processors, microprocessors have become steadily more powerful, efficient, and versatile. With workloads like AI and machine learning driving new designs, we can expect microprocessors to keep evolving and to continue shaping the future of computing.